Text ad writing and testing is simple to understand and relatively easy to execute, yet full of subtleties that influence success. If you want proof, just look at last week’s Text Ad Optimization Q&A series. Our experts shared a wide range of opinions in their answers to 5 text ad testing questions, including more than a few disagreements and contradictions:

- What are the biggest text ad testing mistakes?

- How do you pick which ads to test first?

- What factors have the greatest influence on testing?

- How important is text ad testing in overall campaign optimization?

- Have you had any surprising text ad testing results?

I’ve collected all of the answers into the Text Ad Testing Master’s Guide. You can download the PDF (no registration) or read the embedded guide below. If you’re short on patience or time, I also pulled together my 3 favorite answers to each of the questions in the Best of the Text Ad Testing Q&A listed below. If you liked the series, check out The Ultimate List of PPC Ad Testing Resources. This is our latest post to celebrate the launch of Text Ad Zoom. To see that and all of the other great ClickEquations features in action, request a demo.

Best of Text Ad Testing Q&A

#1 What are some of the biggest mistakes people make in text ad testing (aside from only measuring CTR changes)?

Brad Geddes: I don’t think enough people focus on Profit Per Impression. Just choosing the ad with the lowest CPA or highest conversion rate does not mean you will bring in the most revenue for your account. Another mistake is not having enough data before making decisions. There are too many online calculators where you can input some very low numbers (like 15 impressions and 1 click for one ad and 15 impressions and 10 clicks for another) and the tool will tell you that you have a winner. The number one mistake, though, is not doing it at all. Ad copy testing is so easy that everyone should always be running a few tests at any one time.

Andrew Goodman: I often hear: “test only one variable at a time.” Statistically, this really makes no sense, and more than that, it’s impractical. From a statistical standpoint, if you go in and try to isolate which of two calls to action is “better,” for starters, you’re ignoring variable interactions (once anything else you want to test has to be changed, you’re now assuming the winner from the previous test would interact most favorably with the changed conditions) and you’re ignoring the opportunity costs of the other tests you could be running. People will interpret this “test little things one at a time” maxim so literally, they will take forever to optimize properly. What this approach fails to see is how blinkered it makes you. “Is ‘buy now’ or ‘buy today’ a better call to action?” Maybe they’re about the same, or maybe what you’ve just done is rule out a different style of ad that took more room talking about pricing or a third party endorsement, or some other trigger. There is absolutely nothing wrong with bolder testing of three or four very different styles of ad, to see if any of these create a significantly better response. For some reason, that sounds unscientific to some people, but you don’t create marketing results by spending your time in the wrong chapters of the wrong statistics textbooks.

Jeff Sexton: Well, perhaps the biggest mistake is NOT optimizing ad text, or doing some testing and then adopting a “set it and forget it” mindset. But, assuming that people are actively testing their ad text, the next biggest mistake is not thinking past the keywords to get at the searcher intention BEHIND those keywords. Behind every set of keywords are people who are searching on those keywords in response to a need, problem, or question. Optimizing ad text means writing ads that better speak to those people on the other end of the screen. So you should be looking at actual searcher queries associated with those keywords, past test results, competitive ads and landing pages, etc. in order to actively seek out an understanding of searcher mindset. Once you have that hypothesis you’ll be able to write ads on a more coherent basis and also be able to interpret test results on a more scientific basis. In other words, proving or disproving a hypothesis will give you a direction on “what to try next” after each test, whether winning or losing. This will also allow you to more intelligently apply other ad writing best practices.

#2 How do you pick which text ads to test first?

Jessica Niver: If I run into time constraints (can you imagine?), I focus my energy on high-CPL, high-conversion ad groups and on high-conversion, high-competition ad groups regardless of CPL. For ecommerce clients, I focus on any ad groups with multiple sale offers that change frequently or that can be tested against one another. I’d also keep a list of ad groups that have a high seasonal/holiday bias and make sure those are focused on at the right time of year/month as well. Low-CTR, low-Quality Score ad groups are also candidates, though those often need work on keyword-ad relevancy more than just ad text testing. Because it’s testing, the ads you add won’t always improve performance immediately. Maybe they suck and you shouldn’t use that messaging, and that’s what the test shows you. So in spite of the above, I try not to test in all of my high-lead or high-revenue ad groups simultaneously. That maintains a performance safety zone, so I don’t completely damage my clients’ shorter-term performance if something goes unexpectedly wrong.

Erin Sellnow: For regular testing, I tend to focus on my underperforming ad groups first: the ones with a low CTR or Quality Score, since I need to improve their performance in order to better the entire account. If I am looking to do some general experimenting, though, I will look at my high-traffic ad groups first, so I can get baseline results quickly. From there I can tweak the test with other ad groups, but at least I know if the general idea is going to work or not without waiting for months to get results.

Tom Demers:

- Cost – Which groups are spending the largest amount? These are the areas where testing and even small percentage growth in areas like conversion and click-through rate on your ads can have a large impact.

- Opportunity for Improvement – Larger groups that have indicators of problem ads like low CTRs, low Quality Scores across the board, or low conversion rates can be good candidates for optimization. Another good thing to look at here is “internal benchmarks” or peer calculations.

- Time between tests – Another great indication that ad copy could be working harder is when it’s been months (or years) between tests. There are really an infinite number of variations and approaches you can take to testing an ad, so a stale ad almost always offers a great opportunity to find a variation that will resonate better with prospects.
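Tom Demers's three criteria (cost, opportunity for improvement, and time between tests) can be folded into a rough ranking of ad groups. The sketch below is only one possible way to do that, not his method: the `AdGroup` fields, the 0.5/0.3/0.2 weights, the one-year staleness cap, and the use of the mean group CTR as the "internal benchmark" are all assumptions made for illustration.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class AdGroup:
    name: str
    spend: float          # cost over the review period
    ctr: float            # click-through rate as a fraction, e.g. 0.03
    days_since_test: int  # days since the last ad copy test


def priority_score(group: AdGroup, benchmark_ctr: float, total_spend: float) -> float:
    """Illustrative score: higher means 'test this ad group sooner'."""
    # Cost: share of total spend carried by this ad group.
    cost_share = group.spend / total_spend if total_spend > 0 else 0.0
    # Opportunity: how far CTR falls below the internal benchmark (0 if above it).
    if benchmark_ctr > 0:
        ctr_gap = max(0.0, (benchmark_ctr - group.ctr) / benchmark_ctr)
    else:
        ctr_gap = 0.0
    # Time between tests: saturates after one year without a test (assumed cap).
    staleness = min(group.days_since_test / 365, 1.0)
    # Hypothetical weights; adjust them to your own account.
    return 0.5 * cost_share + 0.3 * ctr_gap + 0.2 * staleness


def rank_ad_groups(groups: list[AdGroup]) -> list[AdGroup]:
    """Return ad groups sorted from highest to lowest testing priority."""
    if not groups:
        return []
    total_spend = sum(g.spend for g in groups)
    benchmark_ctr = mean(g.ctr for g in groups)  # simple peer benchmark
    return sorted(
        groups,
        key=lambda g: priority_score(g, benchmark_ctr, total_spend),
        reverse=True,
    )


if __name__ == "__main__":
    sample = [
        AdGroup("brand", 500.0, 0.08, 30),
        AdGroup("widgets", 4000.0, 0.01, 400),
        AdGroup("gadgets", 1500.0, 0.03, 90),
    ]
    for g in rank_ad_groups(sample):
        print(g.name)
```

A weighted sum keeps the trade-offs visible. If Quality Score or conversion rate matters more in your account, it could be added as another term in the same way.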

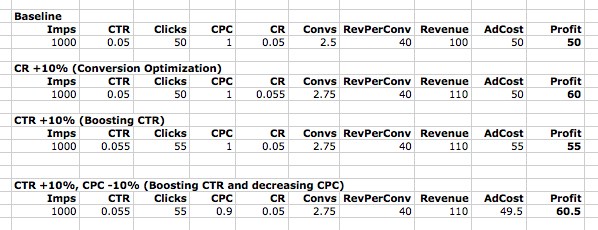
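Brad Geddes's two points under #1 (profit per impression, and calculators that declare a winner on tiny samples) lend themselves to a quick numeric check. The sketch below assumes profit per impression means (revenue − cost) / impressions. It pairs a standard two-proportion z statistic for CTR with a minimum-data guard. The `AdStats` fields and the thresholds of 1,000 impressions and 30 clicks per ad are illustrative assumptions, not recommendations from the answers above.

```python
import math
from dataclasses import dataclass


@dataclass
class AdStats:
    impressions: int
    clicks: int
    conversions: int
    cost: float
    revenue: float

    @property
    def cpa(self) -> float:
        """Cost per acquisition; infinite when there are no conversions."""
        return self.cost / self.conversions if self.conversions else math.inf

    @property
    def profit_per_impression(self) -> float:
        """Assumed definition: (revenue - cost) / impressions."""
        return (self.revenue - self.cost) / self.impressions


def ctr_z_score(a: AdStats, b: AdStats) -> float:
    """Two-proportion z statistic for the CTR difference (b minus a)."""
    p_a = a.clicks / a.impressions
    p_b = b.clicks / b.impressions
    pooled = (a.clicks + b.clicks) / (a.impressions + b.impressions)
    se = math.sqrt(pooled * (1 - pooled) * (1 / a.impressions + 1 / b.impressions))
    return (p_b - p_a) / se if se > 0 else 0.0


def has_enough_data(a: AdStats, b: AdStats,
                    min_impressions: int = 1000, min_clicks: int = 30) -> bool:
    """Guard against calling a winner on tiny samples (thresholds are assumed)."""
    return all(
        ad.impressions >= min_impressions and ad.clicks >= min_clicks
        for ad in (a, b)
    )


if __name__ == "__main__":
    # Ad A has the lower CPA, yet ad B earns more profit per impression.
    ad_a = AdStats(10_000, 200, 10, 200.0, 1000.0)
    ad_b = AdStats(10_000, 500, 20, 500.0, 2000.0)
    print(ad_a.cpa, ad_b.cpa)
    print(ad_a.profit_per_impression, ad_b.profit_per_impression)

    # The tiny sample from the answer: 1 click vs. 10 clicks on 15 impressions each.
    tiny_a = AdStats(15, 1, 0, 0.0, 0.0)
    tiny_b = AdStats(15, 10, 0, 0.0, 0.0)
    print(ctr_z_score(tiny_a, tiny_b), has_enough_data(tiny_a, tiny_b))
```

With the tiny sample, the z statistic clears the usual 1.96 cutoff even though each ad has only 15 impressions. That is the kind of "winner" a naive calculator reports and the guard is meant to block.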

#3 In your experience, what factors have the greatest influence in testing?

Brad Geddes: The headline and display URL. I find that a strong headline can compensate for a weak description line 1. However, a strong description line 1 will not overcome a poor headline. I also think display URL is not tested enough. People like to know where they are going after the click, and the display URL tells the searcher where they will end up. In fact, the instant previews that just rolled out for ads show that Google believes in this as well.

Andrew Goodman: Fit and pain points. If you’re a particular kind of roof repair company then speed of response addresses your buyer’s concerns; same if you are overnighting fresh fish or meats. If you’re a store specializing in large shoe sizes, then selection and a return policy may be the key. Overall, you often just want to “drive it down the middle of the fairway,” so to speak, with obvious messages and minor adjustments. It’s as much staying away from any off-putting verbiage or symbolism as it is convincing people of anything in that small space. Ad position is arguably “the greatest” influence in testing, so the fact that you have the budget to reach premium position (combined with attention to CTRs and your other campaign elements, aimed at higher Quality Score) can’t be divorced from the ad testing exercise. If you’re habitually down in positions 6-8 you may see very different testing dynamics than what you see in 1-2.

Jessica Niver: Adding time-limited offers/sales, pricing in ads, offering a free anything of value (brochure, tool, etc.), prominently branding ad texts for non-branded ad groups (this has worked well for non-branded queries for better known brands).

#4 How important is text ad testing in overall campaign optimization tasks?

Brad Geddes: Essential. When you think of the recurring tasks that you must undertake in a paid search account, such as bidding, adding negative keywords, etc., ad copy testing should be among the top tasks that are done at least monthly (the amount of testing you can do depends on how much data your account collects). When you change bids, you attain a short term gain in your account, but you will have to change bids again in the near future, so the gains are only temporary. When you do ad copy testing, the gains are long term.

Bradd Libby: Brad Geddes had a great blog post a couple of years ago where he laid out the basic search process, like:

Impressions –> (CTR) –> Clicks –> (CR) –> Conversions –> Revenue –> Profit

So, Clicks = Impressions x CTR and Revenue = Conversions x Revenue_per_Conversion.

I’ve attached a spreadsheet screenshot showing what I mean.

He then looked at what, say, a 10% increase in Impressions would do to profit compared to a 10% decrease in CPC, and so forth, to show whether you should worry more about increasing Impressions or cutting CPC (this sort of leaves out considering which of those two things is easier to achieve). The overall lesson I took away was that search is basically a linear process – every step is necessary for searchers to move from the query to the page visit to the purchase. I love bashing Craig Danuloff when he says things like “bidding is maybe 10% or 20% of PPC”. No – if all of your bids are set optimally, then bidding is 0% of your problem. If they are all set horribly, then it’s basically 100% of your problem. So, if you’re using only 1 ad format for all of your ad groups (“Looking for [query]? We’ve got [query] at low, low prices!”), then ad testing is very important for you. If you’re doing it well, it should not be a big issue.

Crosby Grant: Text ad testing falls somewhere after bidding and account structure & keywords, somewhere near negatives. Of course, every account is different, but in a new account, for example, you want to get your structure and keywords right, and your bidding up and running, prior to taking on the optimization tasks that will get you further along.

#5 Have you had any surprising text ad testing results?

Andrew Goodman: Absolutely. We discover many things. Being “in business for 50 years” can come across as a negative, but being “online since 1997” is a positive. I recently tried an ad that explained how users need to scroll to see a category of product, because the client’s site has a poor experience! That doubled conversion rates! You might learn that saying a food item is “delicious” doesn’t help, but calling it “crunchy” does. You do have to keep testing, because it’s really hard to predict what works. I was gobsmacked when I heard of Jeremy Schoemaker’s claim that the winning ad could just be the one that had a certain *shape*: an “arrow shaped ad”!! http://www.shoemoney.com/2007/02/06/google-adwords-arrow-trick-to-increase-click-through-rates/ I’ve incorporated this gently into some ad tests, and I am pretty sure I’ve seen it working from time to time, for no discernible reason other than just that: the shape. I also strip ads to the bone, trying the game of “shortest ad wins”. Sometimes, it does. I believe this speaks to the cognitive process of users, and also perhaps the minimalism of it flatters searchers who have had enough of the busyness of web pages and the excessive claims and information overload purveyed by the overstuffed world of marketing. Seth Godin has a notion of helping natural selection along in organizations, by “increasing the mDNA diversity” (meme DNA) to allow for serendipity. You’ll never make cool discoveries without accidents, multiple sets of eyes, and even “lazy” ads that people just toss up on the board without overthinking. (Remember how Google’s founders came up with the “ingenious” Google UI because they “weren’t designers and don’t do HTML”?) Having multiple sets of eyes and people with diverse perspectives and expertise trying ad experiments can be a plus for sure.

Chad Summerhill: I got a 12% overall lift in my brand campaign by adding the ® symbol campaign-wide.

Jessica Niver: Most of my surprising results have revolved around how much different offers (50% off vs. buy one get one free vs. free shipping) impact CTR and conversion rate. I guess it’s logical, but watching things fluctuate so drastically as a result of changes really demonstrated to me how important it is to test those things and implement what customers want. Also, testing the timing of launch for seasonal or holiday-based ads has been a lesson in how dramatically their performance can change, and in the importance both of using those types of ads to your advantage and of getting them out of your account before they lose value.

Learn More About The Authors


- Brad Geddes – Certified Knowledge

- Andrew Goodman – PageZero

- Jessica Niver – Hanapin Marketing

- Chad Summerhill – PPC Prospector

- Amy Hoffman – Hanapin Marketing

- Erin Sellnow – Hanapin Marketing

- Pete Hall – Room 214, a social media agency

- Ryan Healy – BoostCTR / RyanHealy.com

- Jeff Sexton – BoostCTR / JeffSextonWrites.com

- Tom Demers – BoostCTR / MeasuredSEM

- Bradd Libby – The Search Agents

- Crosby Grant – Stone Temple Consulting

- Rob Boyd – Hanapin Marketing

- Greg Meyers – SEMGeek / iGesso

- Bonnie Schwartz – SEER Interactive

- John Lee – Clix Marketing

- Jon Rognerud – JonRognerud.com

- Joe Kerschbaum – Clix Marketing